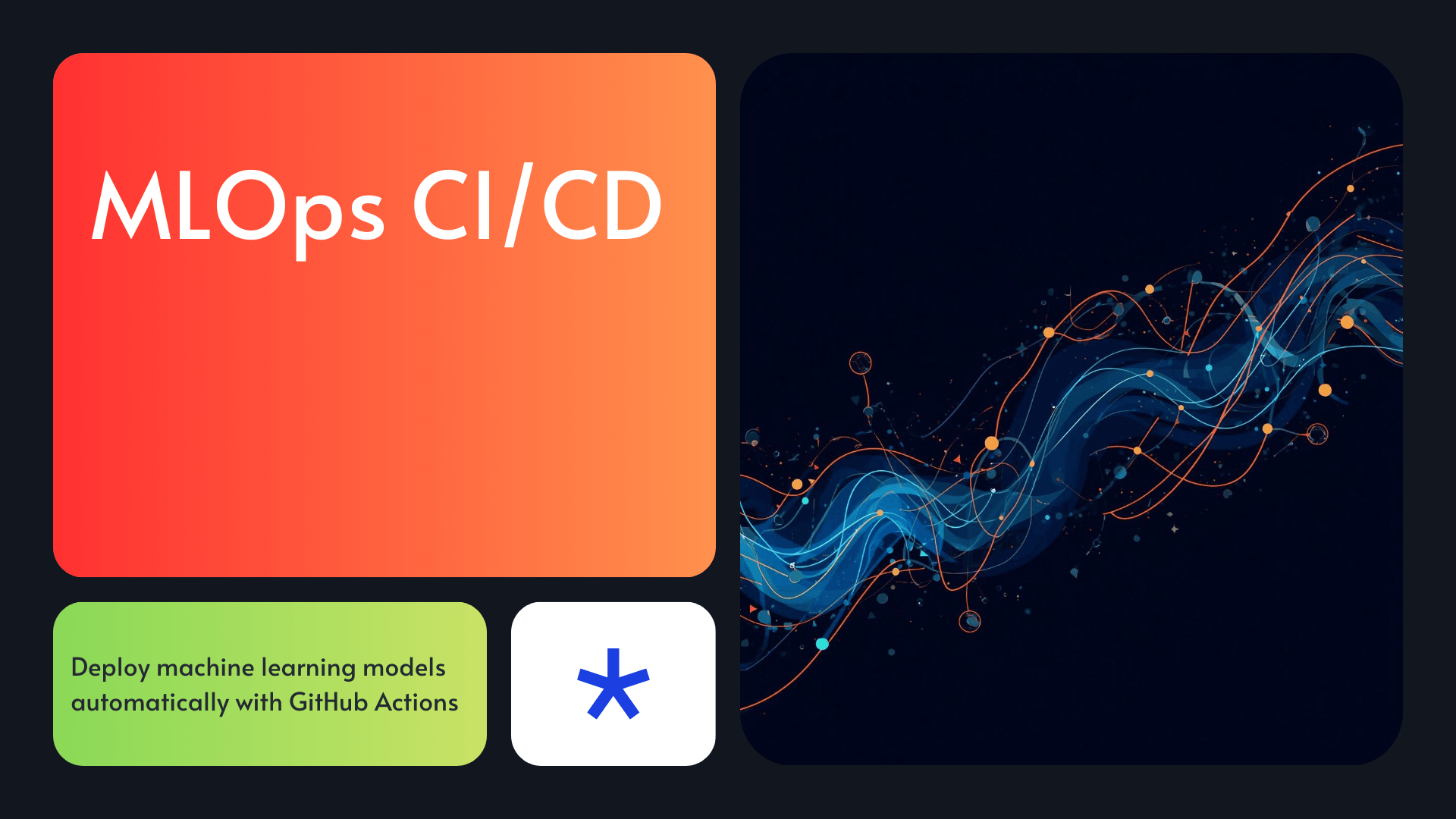

MLOps CI/CD: How to Deploy Machine Learning Models Using CI/CD Pipelines

Introduction

Are your machine learning models sitting idle after training? You are not alone. 🚀

Most teams train great models. But then they struggle to get those models into production. Deployments break. Results drift. Retraining is manual and painful. This is exactly why MLOps CI/CD exists.

MLOps applies DevOps principles to machine learning workflows. It automates model training, testing, versioning, and deployment. The core of any MLOps setup is your CI/CD pipeline. With the right pipeline, every model update triggers automated testing and deployment. No manual steps. No broken releases.

In this guide, you will learn how to build a complete MLOps pipeline from scratch with DVC, GitHub Actions, Terraform, and AWS SageMaker, with Azure ML shown as an alternative deployment target. Each section includes code and real-world examples, from model versioning through monitoring and observability after deployment.

Devolity Business Solutions builds end-to-end MLOps pipelines for organizations transitioning to AI-driven products. Their certified engineers deploy scalable, automated ML infrastructure on AWS, Azure, and GCP.

First, let us start with the basics. What exactly is MLOps?

What Is MLOps?

MLOps stands for Machine Learning Operations. It is a set of practices that combine ML, DevOps, and data engineering. The goal is simple: deploy and maintain ML models reliably at scale.

Think of MLOps as the bridge between your data science team and production systems. Without it, models often live only in Jupyter notebooks. With it, models run in automated, monitored, production pipelines.

Core Components of MLOps

| Component | Description | Tool Examples |

|---|---|---|

| Model Versioning | Track model artifacts and datasets | DVC, MLflow, Git |

| CI/CD Pipelines | Automate train, test, and deploy | GitHub Actions, Jenkins |

| Model Registry | Store and manage model versions | MLflow, SageMaker |

| Monitoring | Track model performance post-deploy | Prometheus, Grafana, Evidently |

| Infrastructure as Code | Reproducible cloud setup | Terraform, CloudFormation |

Additionally, MLOps includes data versioning, experiment tracking, and feature stores. Each piece builds on the others. Therefore, a complete MLOps CI/CD setup requires all of them working together.

Why MLOps Matters Now

- 87% of data science projects never make it to production (VentureBeat, 2019)

- Manual deployments cause 60%+ of production ML failures

- MLOps reduces deployment time from weeks to hours

Consequently, companies that adopt MLOps ship better models faster. Furthermore, they spend less time fixing broken deployments.


MLOps vs DevOps — Key Differences

DevOps automates software delivery. MLOps automates model delivery. The two share many tools and principles. However, ML introduces unique challenges.

Key Differences Table

| Dimension | DevOps | MLOps |

|---|---|---|

| Artifacts | Code | Code + Model + Data |

| Testing | Unit, integration tests | Data validation + model accuracy tests |

| Versioning | Git for code | Git + DVC for code, data, and models |

| Monitoring | CPU, latency, errors | Data drift, prediction drift, accuracy |

| Retraining | N/A | Triggered by drift or schedule |

| Reproducibility | Code reproducibility | Full experiment reproducibility |

The Data Dependency Problem

In DevOps, code is your artifact. In MLOps, data is also an artifact. Changing the training data changes the model output. Therefore, you must version your datasets alongside your code.

Models also decay over time, because real-world data distributions shift away from the data the model was trained on. This phenomenon is called data drift. Your CI/CD pipeline therefore needs to detect drift and trigger retraining automatically.
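
As a rough illustration of what "detecting drift" can mean for a single numeric feature, the sketch below computes a Population Stability Index (PSI) between a training sample and a production sample. The function name `psi`, the bin count, and the epsilon are assumptions chosen for this example, not part of any tool used in this guide.

```python
import math
from typing import Sequence


def psi(reference: Sequence[float], current: Sequence[float], bins: int = 10) -> float:
    """Population Stability Index between two samples of one numeric feature."""
    lo, hi = min(reference), max(reference)
    width = (hi - lo) / bins or 1.0  # guard against a constant feature

    def bucket_shares(values: Sequence[float]) -> list:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp out-of-range values
        # A small floor keeps log() finite when a bucket is empty.
        return [max(c / len(values), 1e-6) for c in counts]

    ref, cur = bucket_shares(reference), bucket_shares(current)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref, cur))


training = [i / 100 for i in range(100)]            # roughly uniform on [0, 1)
production = [0.5 + i / 200 for i in range(100)]    # shifted toward higher values
print(f"PSI: {psi(training, production):.3f}")
```

A common rule of thumb treats a PSI above roughly 0.25 as a significant shift, but the right threshold depends on the feature and should be tuned on historical data.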

Experiment Tracking: A New Requirement

DevOps does not require experiment tracking. MLOps absolutely does. Every training run should log:

- Hyperparameters used

- Dataset version and hash

- Evaluation metrics (accuracy, F1, AUC-ROC)

- Model artifact location

Tools like MLflow and Weights & Biases handle most of this logging for you. Additionally, they can be called directly from your MLOps CI/CD pipeline.
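
To make the list above concrete, here is a minimal, tool-agnostic sketch of a run record that captures the four items. `RunRecord`, `file_sha256`, `log_run`, and the `runs/` directory are hypothetical names for illustration; MLflow and Weights & Biases provide their own APIs for the same purpose.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from pathlib import Path


@dataclass
class RunRecord:
    params: dict          # hyperparameters used
    dataset_path: str     # dataset version is identified by path + hash
    dataset_sha256: str
    metrics: dict         # e.g. accuracy, F1, AUC-ROC
    model_uri: str        # where the model artifact was stored


def file_sha256(path: str) -> str:
    """Hash a file in 1 MiB chunks so large datasets do not need to fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(1 << 20), b""):
            digest.update(chunk)
    return digest.hexdigest()


def log_run(record: RunRecord, out_dir: str = "runs") -> Path:
    """Write the record as JSON, named after the dataset hash prefix."""
    Path(out_dir).mkdir(parents=True, exist_ok=True)
    out = Path(out_dir) / f"run-{record.dataset_sha256[:12]}.json"
    out.write_text(json.dumps(asdict(record), indent=2, sort_keys=True))
    return out
```

Keying the record on the dataset hash means two runs on byte-identical data are easy to compare, while any change to the data produces a new record.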


Building an MLOps Pipeline Architecture

Before writing code, you need a clear architecture. A production-ready MLOps CI/CD pipeline has five core stages.

MLOps Pipeline: ASCII Architecture Diagram

┌─────────────────────────────────────────────────────────────────────────┐
│                    MLOps CI/CD Pipeline Architecture                    │
├─────────────────────────────────────────────────────────────────────────┤
│                                                                         │
│  [Developer Pushes Code]                                                │
│         │                                                               │
│         ▼                                                               │
│  ┌─────────────┐     ┌─────────────┐     ┌──────────────┐               │
│  │   GitHub    │────▶│   GitHub    │────▶│     Data     │               │
│  │ Repository  │     │   Actions   │     │  Validation  │               │
│  └─────────────┘     └─────────────┘     └──────────────┘               │
│                             │                   │                       │
│                             ▼                   ▼                       │
│                    ┌─────────────────┐   ┌──────────────┐               │
│                    │ Model Training  │   │   DVC Pull   │               │
│                    │ (SageMaker /    │◀──│  Data from   │               │
│                    │  Azure ML)      │   │   S3 / GCS   │               │
│                    └─────────────────┘   └──────────────┘               │
│                             │                                           │
│                             ▼                                           │
│                    ┌─────────────────┐                                  │
│                    │ Model Testing   │                                  │
│                    │ & Validation    │                                  │
│                    │ (Accuracy Gate) │                                  │
│                    └─────────────────┘                                  │
│                             │                                           │
│             ┌───────────────┴────────────────┐                          │
│             │ Pass                      Fail │                          │
│             ▼                                ▼                          │
│    ┌─────────────────┐              ┌──────────────────┐                │
│    │  Deploy Model   │              │    Alert Team    │                │
│    │  (SageMaker /   │              │    Block Merge   │                │
│    │   Azure ML /    │              └──────────────────┘                │
│    │   GKE)          │                                                  │
│    └─────────────────┘                                                  │
│             │                                                           │
│             ▼                                                           │
│    ┌─────────────────┐                                                  │
│    │   Production    │                                                  │
│    │   Monitoring    │                                                  │
│    │  (Evidently +   │                                                  │
│    │   Grafana)      │                                                  │
│    └─────────────────┘                                                  │
│                                                                         │
└─────────────────────────────────────────────────────────────────────────┘

The Five Stages

- Source Stage — Code and data change triggers the pipeline

- Build Stage — Install dependencies and run data validation

- Train Stage — Automated model training runs on cloud compute

- Test Stage — Model quality gates check accuracy and performance

- Deploy Stage — Passing models are deployed to production endpoints
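
The control flow behind these five stages can be sketched in a few lines: run each stage in order and stop at the first failure, so a failed quality gate means the deploy stage never runs. The stage callables here are placeholders (assumptions for illustration); in the real pipeline each one is a GitHub Actions step or job.

```python
from typing import Callable, List, Tuple

Stage = Tuple[str, Callable[[], bool]]


def run_pipeline(stages: List[Stage]) -> List[str]:
    """Run stages in order; stop at the first failure and return the completed names."""
    completed = []
    for name, step in stages:
        if not step():
            print(f"Stage '{name}' failed; skipping remaining stages.")
            break
        completed.append(name)
    return completed


stages = [
    ("source", lambda: True),
    ("build", lambda: True),
    ("train", lambda: True),
    ("test", lambda: False),   # simulated failing quality gate
    ("deploy", lambda: True),  # never reached in this example
]
print(run_pipeline(stages))
```

With the simulated failure in the test stage, the function returns `['source', 'build', 'train']` and deploy is skipped.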

Next, let us implement each stage step by step.

Step 1: Model Versioning With DVC

DVC (Data Version Control) works like Git for ML data and models. It integrates with your Git repository: small metafiles are committed to Git, while the large files themselves are stored in cloud storage (S3, GCS, or Azure Blob). ⚡

Why DVC Is Essential for MLOps CI/CD

Without DVC, you cannot reproduce experiments. You cannot track which dataset trained which model. Additionally, you cannot roll back to a previous model version safely.

Setting Up DVC

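# Install DVC with S3 support (quoted so shells such as zsh do not expand the brackets)
pip install "dvc[s3]"

# Initialize DVC in your project
dvc init

# Set up remote storage (AWS S3)
dvc remote add -d myremote s3://your-ml-bucket/dvc-store
dvc remote modify myremote region us-east-1

# Track your dataset
dvc add data/training_data.csv

# Commit the generated metafiles so Git records the dataset version
git add data/training_data.csv.dvc data/.gitignore .dvc/config
git commit -m "Track training data with DVC"

# Push data to remote
dvc push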

Tracking Model Artifacts

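# Option 1: track an already trained model file directly
dvc add models/classifier.pkl

# Option 2: define a pipeline stage that produces the model.
# Current DVC releases use `dvc stage add` in place of the older `dvc run`.
# A file is tracked either by `dvc add` or as a stage output, not both.
dvc stage add -n train \
    -d data/training_data.csv \
    -d src/train.py \
    -o models/classifier.pkl \
    python src/train.py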

DVC Pipeline File (dvc.yaml)

stages:
  preprocess:
    cmd: python src/preprocess.py
    deps:
      - src/preprocess.py
      - data/raw/dataset.csv
    outs:
      - data/processed/train.csv
      - data/processed/test.csv
  train:
    cmd: python src/train.py
    deps:
      - src/train.py
      - data/processed/train.csv
    # Keys read from params.yaml; changing any of them reruns this stage
    params:
      - seed
      - n_estimators
      - max_depth
    outs:
      - models/classifier.pkl
    metrics:
      - metrics/scores.json:
          cache: false
  evaluate:
    # The quality gate in src/evaluate.py only reads the metrics written by the
    # train stage, so it declares them as a dependency and produces no outputs.
    cmd: python src/evaluate.py
    deps:
      - src/evaluate.py
      - metrics/scores.json

Furthermore, DVC generates a dvc.lock file. This file locks the exact dataset and model versions used. Therefore, anyone on your team can reproduce the exact same experiment.
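
The idea behind a lock file can be sketched without DVC itself: hash every dependency, store the hashes, and rerun a stage only when a hash changes. This is an illustration of the concept under simplified assumptions, not DVC's actual implementation; `fingerprint`, `needs_rerun`, and `write_lock` are hypothetical helper names, and the JSON layout is made up for the example.

```python
import hashlib
import json
from pathlib import Path
from typing import Dict, List


def fingerprint(paths: List[str]) -> Dict[str, str]:
    """Map each dependency path to the SHA-256 of its contents."""
    return {p: hashlib.sha256(Path(p).read_bytes()).hexdigest() for p in sorted(paths)}


def needs_rerun(deps: List[str], lock_file: str) -> bool:
    """True if there is no lock yet or any dependency hash differs from the lock."""
    lock = Path(lock_file)
    if not lock.exists():
        return True
    return json.loads(lock.read_text()) != fingerprint(deps)


def write_lock(deps: List[str], lock_file: str) -> None:
    """Record the current dependency hashes after a successful run."""
    Path(lock_file).write_text(json.dumps(fingerprint(deps), indent=2))
```

Because the lock stores content hashes rather than timestamps, checking out an old commit and its lock file identifies exactly which data and code versions produced a given model.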


Step 2: Automated MLOps CI/CD Training Pipeline

GitHub Actions is a popular CI/CD tool for MLOps workflows. It is free for public repositories, and it integrates with AWS, Azure, and GCP through marketplace actions. 💡

Project Repository Structure

ml-project/
├── .github/
│   └── workflows/
│       ├── train.yml        # Training pipeline
│       └── deploy.yml       # Deployment pipeline
├── src/
│   ├── train.py
│   ├── evaluate.py
│   └── preprocess.py
├── models/
├── data/
├── terraform/
│   ├── main.tf
│   └── variables.tf
├── dvc.yaml
├── dvc.lock
├── params.yaml
└── requirements.txt

GitHub Actions Training Workflow

# .github/workflows/train.yml
name: ML Training Pipeline

on:
  push:
    branches: [main]
    paths:
      - 'src/**'
      - 'data/**'
      - 'params.yaml'
      - 'dvc.yaml'
  # Lets the drift monitor (src/monitor.py) request retraining through the API
  repository_dispatch:
    types: [retrain-model]

jobs:
  train:
    runs-on: ubuntu-latest
    steps:
      - name: Checkout code
        uses: actions/checkout@v4

      - name: Set up Python
        uses: actions/setup-python@v5
        with:
          python-version: '3.10'

      - name: Install dependencies
        run: |
          pip install -r requirements.txt
          pip install "dvc[s3]"

      - name: Configure AWS credentials
        uses: aws-actions/configure-aws-credentials@v4
        with:
          aws-access-key-id: ${{ secrets.AWS_ACCESS_KEY_ID }}
          aws-secret-access-key: ${{ secrets.AWS_SECRET_ACCESS_KEY }}
          aws-region: us-east-1

      - name: Pull data with DVC
        run: dvc pull

      - name: Run DVC pipeline
        run: dvc repro

      - name: Push model artifacts
        run: dvc push

      # v3 of the artifact actions has been retired; v4 is required
      - name: Upload metrics
        uses: actions/upload-artifact@v4
        with:
          name: metrics
          path: metrics/

Training Script (src/train.py)

import json
import os

import joblib
import pandas as pd
import yaml
from sklearn.ensemble import RandomForestClassifier
from sklearn.metrics import accuracy_score, f1_score
from sklearn.model_selection import train_test_split


def train():
    # Load parameters
    with open("params.yaml") as f:
        params = yaml.safe_load(f)

    # Load data
    df = pd.read_csv("data/processed/train.csv")
    X = df.drop("target", axis=1)
    y = df["target"]
    X_train, X_val, y_train, y_val = train_test_split(
        X, y, test_size=0.2, random_state=params["seed"]
    )

    # Train model
    model = RandomForestClassifier(
        n_estimators=params["n_estimators"],
        max_depth=params["max_depth"],
        random_state=params["seed"]
    )
    model.fit(X_train, y_train)

    # Evaluate
    val_preds = model.predict(X_val)
    accuracy = accuracy_score(y_val, val_preds)
    f1 = f1_score(y_val, val_preds, average="weighted")

    # Output directories may not exist on a fresh CI checkout
    os.makedirs("metrics", exist_ok=True)
    os.makedirs("models", exist_ok=True)

    # Save metrics
    metrics = {"accuracy": accuracy, "f1_score": f1}
    with open("metrics/scores.json", "w") as f:
        json.dump(metrics, f)

    # Save model
    joblib.dump(model, "models/classifier.pkl")
    print(f"Training complete. Accuracy: {accuracy:.4f}, F1: {f1:.4f}")


if __name__ == "__main__":
    train()

Additionally, store hyperparameters in params.yaml and list the keys under the params section of the train stage in dvc.yaml. DVC then tracks each declared value. Consequently, changing a tracked parameter makes the next dvc repro rerun training.

Step 3: MLOps CI/CD Model Testing and Validation

Deploying an untested model is dangerous. Your MLOps CI/CD pipeline must include automated quality gates. These gates block deployment if the model fails accuracy thresholds.

Types of ML Model Tests

| Test Type | What It Checks | Tool |

|---|---|---|

| Data Validation | Schema, nulls, distributions | Great Expectations, Pandera |

| Model Accuracy Gate | Accuracy, F1, AUC above threshold | Custom script, pytest |

| Regression Test | New model vs. baseline model | MLflow, custom script |

| Latency Test | Inference time under limit | Locust, custom script |

| Bias Test | Fairness across demographic groups | Fairlearn, AIF360 |
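
The latency row in the table above can be implemented as a small custom script. The sketch below times repeated calls to a prediction function and compares the 95th percentile against a limit. `p95_latency_ms`, `check_latency`, and the warm-up count are assumptions for this example; for load testing against a live endpoint, a tool such as Locust is the better fit.

```python
import statistics
import time
from typing import Callable, Sequence


def p95_latency_ms(predict: Callable, samples: Sequence, warmup: int = 3) -> float:
    """Return the 95th percentile latency of predict() over the samples, in ms."""
    for sample in samples[:warmup]:
        predict(sample)  # warm caches before measuring
    timings = []
    for sample in samples:
        start = time.perf_counter()
        predict(sample)
        timings.append((time.perf_counter() - start) * 1000)
    # quantiles(n=20) returns 19 cut points; the last one is the 95th percentile.
    return statistics.quantiles(timings, n=20)[-1]


def check_latency(predict: Callable, samples: Sequence, limit_ms: float) -> bool:
    """Quality gate: pass only if p95 latency stays within the limit."""
    return p95_latency_ms(predict, samples) <= limit_ms
```

In CI, this would wrap `model.predict` on a handful of representative rows and call `sys.exit(1)` when `check_latency` returns False, mirroring the accuracy gate.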

Data Validation With Pandera

# src/validate_data.py
import sys

import pandas as pd
import pandera as pa
from pandera import Check, Column, DataFrameSchema

# Expected schema for the processed training data
schema = DataFrameSchema({
    "feature_1": Column(float, Check.between(0, 1)),
    "feature_2": Column(float, Check.ge(0)),
    "feature_3": Column(int, Check.isin([0, 1, 2, 3])),
    "target": Column(int, Check.isin([0, 1]))
})


def validate(data_path: str):
    df = pd.read_csv(data_path)
    try:
        schema.validate(df)
        print("✅ Data validation passed")
        return True
    except pa.errors.SchemaError as e:
        print(f"❌ Data validation failed: {e}")
        return False


if __name__ == "__main__":
    valid = validate("data/processed/train.csv")
    # A non-zero exit code fails the CI step
    sys.exit(0 if valid else 1)

Model Quality Gate

# src/evaluate.py
import json
import sys

ACCURACY_THRESHOLD = 0.85
F1_THRESHOLD = 0.82


def check_quality_gate():
    with open("metrics/scores.json") as f:
        metrics = json.load(f)

    accuracy = metrics["accuracy"]
    f1 = metrics["f1_score"]
    print(f"Accuracy: {accuracy:.4f} (threshold: {ACCURACY_THRESHOLD})")
    print(f"F1 Score: {f1:.4f} (threshold: {F1_THRESHOLD})")

    if accuracy < ACCURACY_THRESHOLD or f1 < F1_THRESHOLD:
        print("❌ Model failed quality gate. Blocking deployment.")
        sys.exit(1)
    else:
        print("✅ Model passed quality gate. Proceeding to deploy.")
        sys.exit(0)


if __name__ == "__main__":
    check_quality_gate()

Therefore, if metrics fall below thresholds, the CI/CD pipeline fails. Specifically, GitHub Actions marks the job as failed. The deployment job never runs. This prevents bad models from reaching production.
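
Fixed thresholds catch models that are bad in absolute terms. The regression test from the table above instead compares a candidate against the current baseline model. The sketch below is one way to express that, assuming both metric sets are plain dictionaries like the one in metrics/scores.json; `passes_regression` and the default tolerance are hypothetical.

```python
def passes_regression(candidate: dict, baseline: dict, tolerance: float = 0.01) -> bool:
    """True if no baseline metric drops by more than `tolerance` in the candidate."""
    missing = float("-inf")  # a metric absent from the candidate counts as a regression
    regressions = {
        name: (baseline[name], candidate.get(name, missing))
        for name in baseline
        if candidate.get(name, missing) < baseline[name] - tolerance
    }
    for name, (old, new) in regressions.items():
        print(f"Regression in {name}: {old:.4f} -> {new:.4f}")
    return not regressions


baseline = {"accuracy": 0.905, "f1_score": 0.870}
candidate = {"accuracy": 0.900, "f1_score": 0.880}
print(passes_regression(candidate, baseline))
```

The tolerance absorbs run-to-run noise, so a candidate that is marginally lower on one metric is not rejected outright.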

Step 4: MLOps CI/CD Deploy to AWS SageMaker or Azure ML

Once your model passes quality gates, deploy it automatically. We show both AWS SageMaker and Azure ML deployment workflows.

Option A: Deploy to AWS SageMaker

SageMaker is AWS’s managed ML platform. It handles model hosting, scaling, and endpoint management. Furthermore, it integrates natively with IAM, S3, and CloudWatch. 🛡️

# src/deploy_sagemaker.py
import os
import tarfile
import time

import boto3


def create_model_archive():
    """Package model for SageMaker deployment."""
    with tarfile.open("model.tar.gz", "w:gz") as tar:
        tar.add("models/classifier.pkl", arcname="classifier.pkl")
        tar.add("src/inference.py", arcname="inference.py")


def upload_to_s3(bucket: str, key: str):
    """Upload model archive to S3."""
    s3 = boto3.client("s3")
    s3.upload_file("model.tar.gz", bucket, key)
    return f"s3://{bucket}/{key}"


def deploy_to_sagemaker(model_uri: str, role_arn: str):
    """Create the SageMaker endpoint, or update it on later deployments."""
    sm = boto3.client("sagemaker")

    # Model and endpoint-config names must be unique, so suffix them per
    # deployment; the endpoint name stays stable for clients.
    model_name = f"ml-classifier-{time.strftime('%Y%m%d-%H%M%S')}"
    endpoint_config_name = f"{model_name}-config"
    endpoint_name = "ml-classifier-v1-endpoint"

    # Create model
    sm.create_model(
        ModelName=model_name,
        PrimaryContainer={
            "Image": "683313688378.dkr.ecr.us-east-1.amazonaws.com/sagemaker-scikit-learn:1.0-1",
            "ModelDataUrl": model_uri,
            "Environment": {
                "SAGEMAKER_PROGRAM": "inference.py"
            }
        },
        ExecutionRoleArn=role_arn
    )

    # Create endpoint config
    sm.create_endpoint_config(
        EndpointConfigName=endpoint_config_name,
        ProductionVariants=[{
            "VariantName": "primary",
            "ModelName": model_name,
            "InstanceType": "ml.t2.medium",
            "InitialInstanceCount": 1
        }]
    )

    # Create the endpoint on first deploy; afterwards point it at the new config
    existing = sm.list_endpoints(NameContains=endpoint_name)["Endpoints"]
    if any(e["EndpointName"] == endpoint_name for e in existing):
        sm.update_endpoint(
            EndpointName=endpoint_name,
            EndpointConfigName=endpoint_config_name
        )
    else:
        sm.create_endpoint(
            EndpointName=endpoint_name,
            EndpointConfigName=endpoint_config_name
        )

    # Endpoint creation is asynchronous; wait until it is serving traffic
    sm.get_waiter("endpoint_in_service").wait(EndpointName=endpoint_name)
    print(f"✅ Deployed to SageMaker endpoint: {endpoint_name}")


if __name__ == "__main__":
    create_model_archive()
    model_uri = upload_to_s3("your-ml-bucket", "models/model.tar.gz")
    # The deploy workflow passes the role ARN in the SAGEMAKER_ROLE variable
    deploy_to_sagemaker(model_uri, role_arn=os.environ["SAGEMAKER_ROLE"])
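
The archive built above includes src/inference.py, which the guide does not show. A minimal sketch might look like the following, using the handler names the SageMaker scikit-learn serving container looks for (`model_fn`, `input_fn`, `predict_fn`, `output_fn`). The JSON request shape with an `"instances"` key is an assumption made for this example; adapt it to whatever your clients send.

```python
# src/inference.py (sketch)
import json
import os


def model_fn(model_dir):
    """Load the model from the directory where SageMaker unpacks model.tar.gz."""
    import joblib  # imported lazily so the other handlers can be unit tested without it
    return joblib.load(os.path.join(model_dir, "classifier.pkl"))


def input_fn(request_body, request_content_type):
    """Parse a JSON request into a list of feature rows."""
    if request_content_type != "application/json":
        raise ValueError(f"Unsupported content type: {request_content_type}")
    payload = json.loads(request_body)
    return payload["instances"]  # assumed request shape: {"instances": [[...], ...]}


def predict_fn(input_data, model):
    """Run the model on the parsed rows."""
    return model.predict(input_data)


def output_fn(prediction, content_type):
    """Serialize predictions, converting NumPy scalars to plain Python values."""
    values = [p.item() if hasattr(p, "item") else p for p in prediction]
    return json.dumps({"predictions": values})
```

Keeping each handler small makes it straightforward to unit test the parsing and serialization locally before packaging, which helps avoid 500 errors from the endpoint.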

Option B: Deploy to Azure ML

# src/deploy_azure.py
from azure.ai.ml import MLClient
from azure.ai.ml.entities import (
    ManagedOnlineEndpoint,
    ManagedOnlineDeployment,
    Model,
    Environment,
    CodeConfiguration
)
from azure.identity import DefaultAzureCredential


def deploy_to_azure(
    subscription_id: str,
    resource_group: str,
    workspace: str
):
    """Deploy model to Azure ML managed endpoint."""
    credential = DefaultAzureCredential()
    ml_client = MLClient(
        credential, subscription_id, resource_group, workspace
    )

    # Register model
    model = ml_client.models.create_or_update(
        Model(
            name="ml-classifier",
            path="models/classifier.pkl",
            type="custom_model",
            description="Automated CI/CD deployment"
        )
    )

    # Create endpoint
    endpoint = ManagedOnlineEndpoint(
        name="ml-classifier-endpoint",
        auth_mode="key"
    )
    ml_client.online_endpoints.begin_create_or_update(endpoint).result()

    # Create deployment
    deployment = ManagedOnlineDeployment(
        name="primary",
        endpoint_name="ml-classifier-endpoint",
        model=model,
        environment=Environment(
            image="mcr.microsoft.com/azureml/sklearn-1.0-ubuntu20.04-py38-cpu-inference"
        ),
        code_configuration=CodeConfiguration(
            code="src/",
            scoring_script="inference.py"
        ),
        instance_type="Standard_DS2_v2",
        instance_count=1
    )
    ml_client.online_deployments.begin_create_or_update(deployment).result()
    print("✅ Deployed to Azure ML endpoint successfully.")

Deployment GitHub Actions Workflow

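# .github/workflows/deploy.yml
name: ML Deployment Pipeline

on:
  workflow_run:
    workflows: ["ML Training Pipeline"]
    types: [completed]

permissions:
  contents: read
  actions: read   # needed to download artifacts from the training run

jobs:
  deploy:
    if: ${{ github.event.workflow_run.conclusion == 'success' }}
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4

      - name: Set up Python
        uses: actions/setup-python@v5
        with:
          python-version: '3.10'

      - name: Install dependencies
        run: |
          pip install -r requirements.txt
          pip install "dvc[s3]"

      - name: Configure AWS credentials
        uses: aws-actions/configure-aws-credentials@v4
        with:
          aws-access-key-id: ${{ secrets.AWS_ACCESS_KEY_ID }}
          aws-secret-access-key: ${{ secrets.AWS_SECRET_ACCESS_KEY }}
          aws-region: us-east-1

      # Artifacts belong to the training run, so its run ID must be passed explicitly
      - name: Download metrics from the training run
        uses: actions/download-artifact@v4
        with:
          name: metrics
          path: metrics
          run-id: ${{ github.event.workflow_run.id }}
          github-token: ${{ secrets.GITHUB_TOKEN }}

      # Resolves the model version recorded in the committed dvc.lock
      - name: Pull trained model with DVC
        run: dvc pull models/classifier.pkl

      - name: Run quality gate check
        run: python src/evaluate.py

      - name: Deploy to SageMaker
        run: python src/deploy_sagemaker.py
        env:
          SAGEMAKER_ROLE: ${{ secrets.SAGEMAKER_ROLE_ARN }}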

Step 5: Monitoring in Production

Deploying a model is not the finish line. Production models need continuous monitoring. Without monitoring, you will not know when a model starts failing.

What to Monitor in Production

| Metric Type | Examples | Why It Matters |

|---|---|---|

| Data Drift | Feature distribution shift | Input data changed from training data |

| Prediction Drift | Output label distribution shift | Model predictions are changing |

| Model Accuracy | Accuracy, F1, AUC (when labels available) | Measures real-world performance |

| Infrastructure | Latency, error rate, CPU/RAM | Operational health of endpoint |

| Business KPIs | Revenue impact, conversion rate | Ties model to business outcomes |
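
Prediction drift from the table above can be checked even before ground-truth labels arrive, by comparing how often each class is predicted now versus in a reference window. The sketch below uses total variation distance for that comparison; the function names and any alert threshold you apply to the result are assumptions for illustration.

```python
from collections import Counter
from typing import Dict, Hashable, Sequence


def label_shares(labels: Sequence[Hashable]) -> Dict[Hashable, float]:
    """Fraction of predictions falling into each class."""
    counts = Counter(labels)
    return {label: n / len(labels) for label, n in counts.items()}


def total_variation_distance(reference: Sequence[Hashable], current: Sequence[Hashable]) -> float:
    """0.0 means identical class mix; 1.0 means completely disjoint predictions."""
    ref, cur = label_shares(reference), label_shares(current)
    classes = set(ref) | set(cur)
    return 0.5 * sum(abs(ref.get(c, 0.0) - cur.get(c, 0.0)) for c in classes)


last_month = [0, 0, 0, 1]   # 25% of predictions were class 1
this_week = [0, 1, 1, 1]    # 75% of predictions are class 1
print(total_variation_distance(last_month, this_week))
```

A large distance does not prove the model is wrong, since the real world may have changed, but it is a cheap signal that the accuracy metrics deserve a closer look.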

Setting Up Evidently AI for Drift Detection

Evidently AI is a widely used open-source library for ML monitoring. It can detect both data drift and prediction drift.

# src/monitor.py
# Written against Evidently's Report API as used below; newer releases
# reorganized these modules, so pin the Evidently version in requirements.txt.
import os

import pandas as pd
import requests
from evidently.metric_preset import ClassificationPreset, DataDriftPreset
from evidently.report import Report


def run_drift_report(reference_path: str, production_path: str):
    """Generate drift report comparing reference vs production data."""
    reference_data = pd.read_csv(reference_path)
    production_data = pd.read_csv(production_path)

    # ClassificationPreset expects "target" and "prediction" columns in both frames
    report = Report(metrics=[
        DataDriftPreset(),
        ClassificationPreset()
    ])
    report.run(
        reference_data=reference_data,
        current_data=production_data
    )

    os.makedirs("monitoring", exist_ok=True)
    report.save_html("monitoring/drift_report.html")

    # The first metric of DataDriftPreset carries the dataset-level drift flag
    results = report.as_dict()
    drift_detected = results["metrics"][0]["result"]["dataset_drift"]

    if drift_detected:
        print("⚠️ Data drift detected! Triggering retraining pipeline...")
        trigger_retraining()
    else:
        print("✅ No significant drift detected.")


def trigger_retraining():
    """Trigger GitHub Actions retraining workflow via API."""
    token = os.environ["GITHUB_TOKEN"]
    repo = "your-org/ml-project"
    url = f"https://api.github.com/repos/{repo}/dispatches"
    headers = {
        "Authorization": f"Bearer {token}",
        "Accept": "application/vnd.github+json"
    }
    # The training workflow must listen for this repository_dispatch event type
    payload = {"event_type": "retrain-model"}
    response = requests.post(url, headers=headers, json=payload)
    response.raise_for_status()
    print(f"Retraining triggered. Status: {response.status_code}")


if __name__ == "__main__":
    run_drift_report(
        "data/reference/train.csv",
        "data/production/current_batch.csv"
    )

Additionally, connect Evidently to Grafana for dashboards. Export the drift metrics from each Evidently report to Prometheus, and let Grafana visualize them. Consequently, your team gets quick visibility into model health.
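
One simple way to bridge a batch drift job and Prometheus is to render the numbers in the Prometheus text exposition format and hand the result to something Prometheus already scrapes, such as a Pushgateway or the node exporter's textfile collector. The sketch below shows only the rendering step; `to_prometheus` and the metric names are hypothetical, and the drift values are assumed to have been pulled out of the report beforehand.

```python
from typing import Dict


def to_prometheus(metrics: Dict[str, float], labels: Dict[str, str]) -> str:
    """Render gauge metrics in the Prometheus text exposition format."""
    label_str = ",".join(f'{key}="{value}"' for key, value in sorted(labels.items()))
    lines = []
    for name, value in sorted(metrics.items()):
        lines.append(f"# TYPE {name} gauge")
        lines.append(f"{name}{{{label_str}}} {value}")
    return "\n".join(lines) + "\n"


text = to_prometheus(
    {"ml_dataset_drift": 1.0, "ml_drifted_feature_share": 0.4},
    {"model": "ml-classifier"},
)
print(text)
```

Grafana can then chart `ml_drifted_feature_share` over time and alert when `ml_dataset_drift` stays at 1 for several consecutive runs.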


Terraform for MLOps CI/CD Infrastructure

Infrastructure as Code (IaC) is a core MLOps requirement. Terraform lets you define your entire ML infrastructure in code. It is repeatable, versioned, and cloud-agnostic. ☁️

Why Terraform for MLOps CI/CD

- Reproduce environments across dev, staging, and production

- Version your infrastructure changes in Git

- Rollback infrastructure just like code

- Prevent configuration drift between environments

Terraform for AWS SageMaker

# terraform/main.tf
terraform {
  required_providers {
    aws = {
      source  = "hashicorp/aws"
      version = "~> 5.0"
    }
  }

  backend "s3" {
    bucket = "your-terraform-state-bucket"
    key    = "mlops/terraform.tfstate"
    region = "us-east-1"
  }
}

provider "aws" {
  region = var.aws_region
}

# S3 bucket for ML artifacts
resource "aws_s3_bucket" "ml_artifacts" {
  bucket = "${var.project_name}-ml-artifacts-${var.environment}"

  tags = {
    Environment = var.environment
    Project     = var.project_name
    ManagedBy   = "Terraform"
  }
}

resource "aws_s3_bucket_versioning" "ml_artifacts" {
  bucket = aws_s3_bucket.ml_artifacts.id

  versioning_configuration {
    status = "Enabled"
  }
}

# IAM Role for SageMaker
resource "aws_iam_role" "sagemaker_role" {
  name = "${var.project_name}-sagemaker-role"

  assume_role_policy = jsonencode({
    Version = "2012-10-17"
    Statement = [{
      Action = "sts:AssumeRole"
      Effect = "Allow"
      Principal = {
        Service = "sagemaker.amazonaws.com"
      }
    }]
  })
}

resource "aws_iam_role_policy_attachment" "sagemaker_full_access" {
  role       = aws_iam_role.sagemaker_role.name
  policy_arn = "arn:aws:iam::aws:policy/AmazonSageMakerFullAccess"
}

resource "aws_iam_role_policy_attachment" "s3_access" {
  role       = aws_iam_role.sagemaker_role.name
  policy_arn = "arn:aws:iam::aws:policy/AmazonS3FullAccess"
}

# ECR Repository for custom Docker images
resource "aws_ecr_repository" "ml_training_image" {
  name                 = "${var.project_name}-training"
  image_tag_mutability = "MUTABLE"

  image_scanning_configuration {
    scan_on_push = true
  }
}

# CloudWatch Log Group for ML pipeline logs
resource "aws_cloudwatch_log_group" "ml_pipeline_logs" {
  name              = "/mlops/${var.project_name}"
  retention_in_days = 30
}

# Outputs
output "s3_bucket_name" {
  value = aws_s3_bucket.ml_artifacts.bucket
}

output "sagemaker_role_arn" {
  value = aws_iam_role.sagemaker_role.arn
}

Terraform Variables

# terraform/variables.tf
variable "aws_region" {
  description = "AWS region for all resources"
  type        = string
  default     = "us-east-1"
}

variable "project_name" {
  description = "Name of the ML project"
  type        = string
  default     = "ml-classifier"
}

variable "environment" {
  description = "Deployment environment"
  type        = string
  default     = "production"
}

Terraform in Your CI/CD Pipeline

      # Add to .github/workflows/deploy.yml
      - name: Setup Terraform
        uses: hashicorp/setup-terraform@v2
        with:
          terraform_version: "1.6.0"

      - name: Terraform Init
        run: terraform init
        working-directory: ./terraform

      - name: Terraform Plan
        run: terraform plan -out=tfplan
        working-directory: ./terraform

      - name: Terraform Apply
        run: terraform apply tfplan
        working-directory: ./terraform
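
Once Terraform has applied, the deployment script needs the values it created, such as the `sagemaker_role_arn` and `s3_bucket_name` outputs defined in main.tf. A small helper can read them from `terraform output -json`, which reports each output as an object with a `value` field. The helper names below are hypothetical, and the sketch assumes the terraform binary is on the PATH.

```python
import json
import subprocess
from typing import Any, Dict


def parse_outputs(raw_json: str) -> Dict[str, Any]:
    """Flatten `terraform output -json` into a simple name -> value mapping."""
    return {name: entry["value"] for name, entry in json.loads(raw_json).items()}


def read_terraform_outputs(workdir: str = "./terraform") -> Dict[str, Any]:
    """Run `terraform output -json` in workdir and return the flattened outputs."""
    result = subprocess.run(
        ["terraform", "output", "-json"],
        cwd=workdir,
        capture_output=True,
        text=True,
        check=True,  # raise if terraform exits with an error
    )
    return parse_outputs(result.stdout)


if __name__ == "__main__":
    outputs = read_terraform_outputs()
    print(outputs["sagemaker_role_arn"])
```

Reading the role ARN this way keeps the deploy script in sync with the infrastructure, instead of copying the value into a secret by hand.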

Before: Teams manually created S3 buckets, IAM roles, and SageMaker configs. Each environment was slightly different. Debugging was painful and time-consuming.

After: Terraform creates identical, version-controlled infrastructure across all environments. Every change is tracked in Git. Rollbacks take seconds.


Real-World Case Study: Automating ML Deployment for a FinTech Company

The Problem (Before MLOps CI/CD)

A mid-sized FinTech company was running a fraud detection model. Here is what their workflow looked like before MLOps:

- Data scientists trained models locally

- Models were manually copied to production servers via FTP

- No automated testing — bad models went live regularly

- Retraining happened quarterly (manual effort, 2-3 days)

- Model performance degraded silently for weeks

Result: A bad model update caused $1.2M in missed fraud in one quarter.

The Solution (After MLOps CI/CD)

Devolity Business Solutions implemented a full MLOps pipeline:

- DVC versioned all training data and model artifacts in S3

- GitHub Actions triggered automated training on every data update

- Quality gates blocked any model below 92% AUC-ROC

- SageMaker hosted production endpoints with autoscaling

- Evidently monitored drift daily and triggered automatic retraining

- Terraform managed all AWS infrastructure as code

Results After 90 Days:

| Metric | Before MLOps | After MLOps |

|---|---|---|

| Deployment time | 3-5 days (manual) | 45 minutes (automated) |

| Model accuracy | 88% AUC (degraded) | 94% AUC (maintained) |

| Retraining frequency | Quarterly | Weekly (automated) |

| Failed deployments | 6 per month | 0 per month |

| Drift detection | None | Real-time (Evidently) |

| Infrastructure setup | 2 days per env | 15 minutes (Terraform) |

Consequently, fraud losses dropped by 31% in the first quarter after implementation.

Troubleshooting Guide

Common MLOps CI/CD issues and how to resolve them:

| Symptom | Root Cause | Solution | Prevention |

|---|---|---|---|

| Pipeline fails on dvc pull | Missing AWS credentials or DVC remote misconfigured | Check AWS_ACCESS_KEY_ID and AWS_SECRET_ACCESS_KEY in GitHub Secrets. Verify dvc remote list points to correct S3 bucket | Store all credentials as GitHub Secrets; run dvc status before push |

| Model accuracy drops suddenly in production | Data drift — production data distribution changed | Run Evidently drift report; compare production data schema to training data | Set up daily drift monitoring with automated alerts on Slack/email |

| SageMaker endpoint returns 500 errors | Inference script error or missing dependency in Docker image | Check CloudWatch logs at /aws/sagemaker/Endpoints/; review inference.py exception handling | Unit test inference code locally; add try/except in model_fn and predict_fn |

| Terraform apply fails with state lock error | A previous terraform apply crashed and left a stale lock in the DynamoDB table that the S3 backend uses for locking | Confirm no other run is in progress, then run terraform force-unlock <lock-id> to remove the lock manually | Use a dedicated DynamoDB table for state locking (set dynamodb_table in the backend block); allow only one pipeline run to apply at a time |

| GitHub Actions runner runs out of memory during training | Large dataset loaded into memory on a standard GitHub-hosted runner (roughly 7 to 16 GB RAM, depending on plan and repository visibility) | Use self-hosted runner or SageMaker Training Job for large workloads | Offload training to cloud compute; use SageMaker or Vertex AI training jobs in CI/CD |

| Model artifact not found after deployment | DVC push was skipped or failed silently in the pipeline | Check DVC push step output in GitHub Actions logs; ensure dvc push runs after dvc repro | Add explicit dvc push step with error checking; use dvc status to validate before push |

| Retraining triggered too frequently | Evidently drift thresholds set too sensitive | Tune stattest_threshold in the Evidently config: raise it for distance-based tests (e.g., 0.1 to 0.2), lower it for p-value-based tests | Monitor false positive drift alerts; tune thresholds based on historical drift patterns |
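
Besides tuning thresholds, a cooldown is a simple guard against the last symptom in the table: even when drift is detected, skip retraining if a run already happened recently. The sketch below is one possible form; `should_retrain` and the one-day default are assumptions, and in practice the last retraining time would come from your pipeline's run history.

```python
from datetime import datetime, timedelta
from typing import Optional


def should_retrain(
    drift_detected: bool,
    last_retrain: Optional[datetime],
    now: datetime,
    cooldown: timedelta = timedelta(days=1),
) -> bool:
    """Retrain only on drift, and at most once per cooldown window."""
    if not drift_detected:
        return False
    return last_retrain is None or now - last_retrain >= cooldown


now = datetime(2025, 1, 10, 12, 0)
print(should_retrain(True, datetime(2025, 1, 10, 9, 0), now))  # within cooldown
print(should_retrain(True, datetime(2025, 1, 8, 9, 0), now))   # cooldown elapsed
```

The monitor script would call this check before dispatching the retraining event, so a noisy drift signal cannot queue a training run every time it fires.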

How Devolity Business Solutions Builds MLOps Pipelines

Building a production-grade MLOps CI/CD pipeline is complex. It requires deep expertise across cloud platforms, DevOps tooling, and machine learning engineering. This is exactly where Devolity Business Solutions excels.

Devolity’s certified MLOps engineers have deployed end-to-end ML pipelines for enterprises across FinTech, healthcare, retail, and logistics. Their team holds AWS Machine Learning Specialty, Azure AI Engineer, and HashiCorp Terraform certifications. Furthermore, they have implemented automated ML deployment workflows that reduced model release cycles by 80% on average.

Devolity’s MLOps engagements cover the full stack. Specifically, they design DVC-based data versioning systems, build GitHub Actions CI/CD pipelines, configure SageMaker and Azure ML endpoints, set up Terraform infrastructure automation, and deploy Evidently-based monitoring dashboards.

What sets Devolity apart:

- 🚀 Speed: Pipeline setup delivered in 2-4 weeks, not months

- 🛡️ Security: IAM policies and network isolation built in from day one

- ☁️ Multi-cloud: AWS, Azure, and GCP support in a single pipeline

- ⚡ Automation: Zero manual steps from commit to production

Additionally, Devolity provides ongoing model performance monitoring and quarterly pipeline audits. Their teams have handled pipelines processing over 10 million predictions per day.

Ready to build your MLOps pipeline the right way? Contact Devolity Business Solutions to schedule a free MLOps architecture review.

Conclusion

Building a production-ready MLOps CI/CD pipeline is no longer optional. It is essential for any team serious about machine learning in production. Here are the five key takeaways from this guide:

- ✅ DVC versions your data and models alongside your code in Git

- ✅ GitHub Actions automates training, testing, and deployment triggers

- ✅ Quality gates block bad models before they ever reach production

- ✅ SageMaker or Azure ML provides managed, scalable model hosting

- ✅ Terraform makes your ML infrastructure reproducible and version-controlled

Furthermore, monitoring is not optional. Set up Evidently drift detection from day one. Consequently, you will catch model degradation before it affects your users or your business.

Your next step: Start with DVC and GitHub Actions. These two tools alone will transform your ML deployment workflow. Then layer in Terraform and production monitoring as you scale.

Connect with Devolity Business Solutions to accelerate your MLOps journey. Their team will review your current setup and design a custom MLOps pipeline for your organization.

Frequently Asked Questions

What is MLOps CI/CD?

MLOps CI/CD is the practice of applying continuous integration and continuous deployment principles to machine learning workflows. It automates model training, testing, and deployment. Every code or data change triggers the pipeline. The goal is reliable, repeatable model releases with zero manual steps. Tools like GitHub Actions, DVC, and SageMaker power most MLOps CI/CD setups.

How do you deploy ML models in production?

To deploy ML models in production, you need three things. First, package your model as a Docker image or serialized artifact. Second, upload it to a model registry (MLflow, SageMaker). Third, create a serving endpoint using SageMaker, Azure ML, or Kubernetes. Your MLOps CI/CD pipeline automates all three steps after a model passes quality gate testing.

What is the difference between MLOps and DevOps?

DevOps automates software code delivery. MLOps automates model delivery. The key difference is data. ML models depend on data, not just code. Therefore, MLOps adds data versioning, experiment tracking, and model monitoring to standard DevOps practices. Additionally, MLOps includes model drift detection and automated retraining, which have no equivalent in traditional DevOps.

How do you use GitHub Actions for machine learning?

Use GitHub Actions to trigger training pipelines on code or data changes. Create YAML workflow files in .github/workflows/. Use DVC to pull versioned datasets inside the workflow. Run training, evaluation, and quality gate scripts as pipeline steps. Finally, call a deployment script or Terraform apply to push passing models to production. Set workflow_run triggers to chain training and deployment pipelines.

What is DVC in machine learning?

DVC stands for Data Version Control. It works like Git but for large ML files: datasets, trained models, and pipeline artifacts. DVC stores file metadata in Git while keeping large files in remote storage (S3, GCS, Azure Blob). It enables full experiment reproducibility. Any team member can dvc pull and reproduce an exact training run from months ago.

How do you monitor ML models in production?

Monitor ML models by tracking data drift, prediction drift, and accuracy metrics. Use Evidently AI to compare incoming production data against training data distributions. Set drift thresholds that trigger automated retraining pipelines when exceeded. Connect Evidently metrics to Prometheus and Grafana for live dashboards. Additionally, set up CloudWatch or Azure Monitor alerts for endpoint latency and error rates.

How does Terraform help with ML infrastructure?

Terraform automates the creation of cloud resources needed for MLOps. It provisions S3 buckets, IAM roles, SageMaker endpoints, ECR repositories, and networking resources. All infrastructure is defined as code, versioned in Git, and reproducible across environments. Consequently, your dev, staging, and production environments are always identical. Terraform also enables fast rollbacks if an infrastructure change causes issues.
