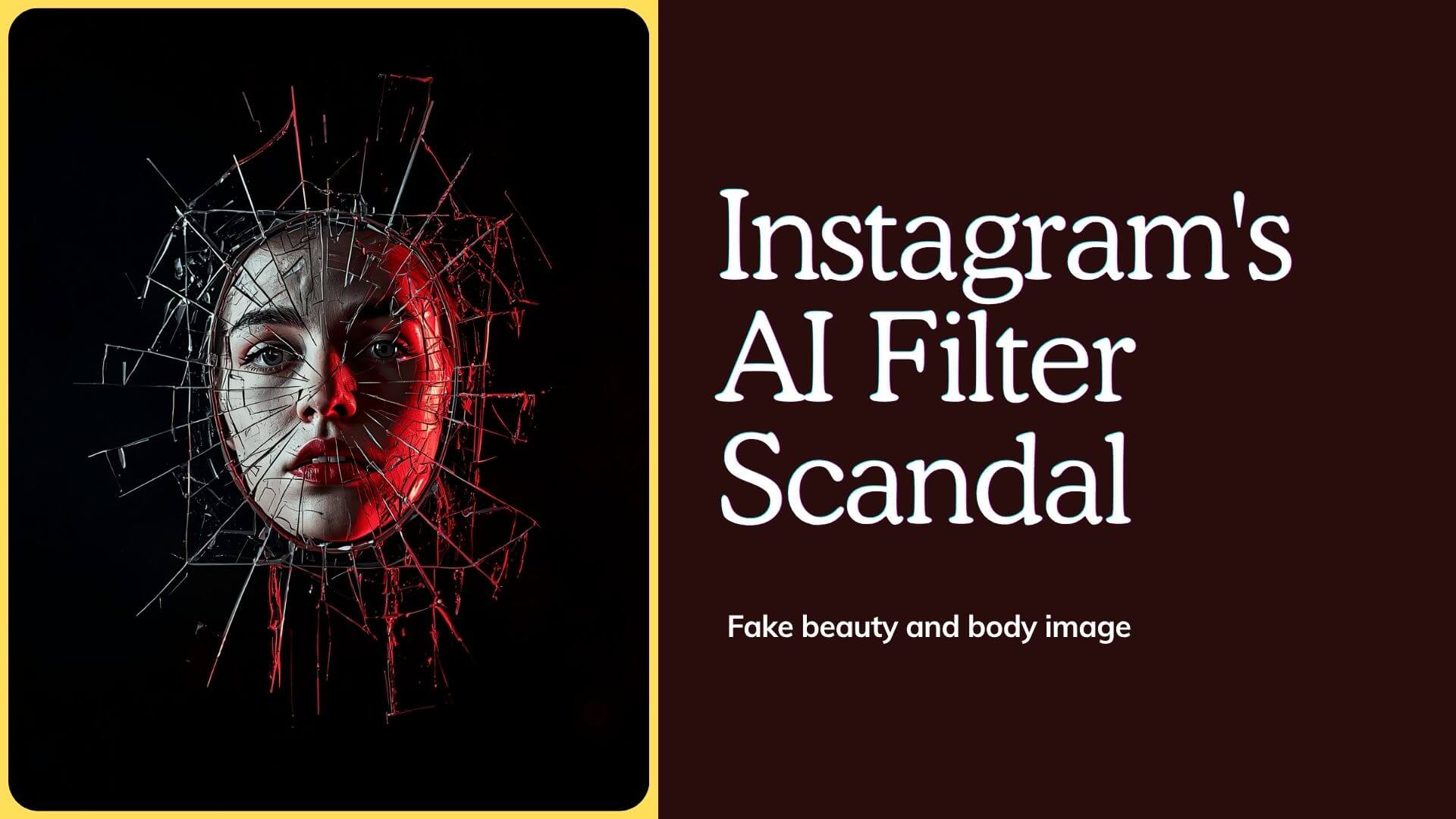

Instagram AI Filter Scandal: How Fake Beauty is Destroying Gen Z

Introduction

There is a filter running on your child’s phone right now. It smooths skin. It widens eyes. It slims the jawline. And it has been running so long that your child no longer knows what their real face looks like. 💡

This is the beauty filter scandal — and it is not a future warning. It is happening right now, to millions of teenagers globally. The technology designed to make photos fun has become a psychological weapon.

Body dysmorphia rates among Gen Z have risen sharply. Cosmetic surgery clinics report teenagers requesting procedures based on how they look in filtered photos. Mental health services are overwhelmed.

In this guide, you will get the full picture. We cover how the technology works, what the research actually shows, and why men are suffering too but staying silent.

Furthermore, we cover which countries are fighting back with legislation and what brave creators are doing to change the conversation. We also detail exactly what parents and educators can do right now to teach digital literacy before the damage is done.

This is not a conversation about vanity. It is a conversation about a generation’s relationship with reality — and the corporations that profit from distorting it.

Devolity Business Solutions works with families, schools, and organisations to build responsible digital strategies that protect wellbeing while embracing technology’s genuine benefits. Their certified digital wellness specialists understand the difference between innovation and exploitation.

First, let us understand exactly what we are dealing with.


Instagram AI Filter: The Filter You Can’t Turn Off

The first face filters on Instagram were novelties. Dog ears. Rainbow vomit. Sunglasses. Harmless fun. However, the technology evolved rapidly, and the direction it evolved in was deeply consequential.

How Beauty Filters Actually Work

Modern beauty filters are not simple overlays. They use real-time AI face tracking to map your face in three dimensions. Then they restructure it: automatically, instantly, continuously.

How Instagram AI Beauty Filters Process Your Face

──────────────────────────────────────────────────────────────
Step 1: AI maps hundreds of facial landmarks in real time (468 in Google’s MediaPipe Face Mesh)
Step 2: Algorithm identifies "deviation" from beauty standard
Step 3: Filter applies targeted corrections automatically:
→ Skin: smoothed, brightened, blemishes removed
→ Eyes: enlarged, brightened, lashes lengthened
→ Nose: narrowed at bridge and tip
→ Jaw: slimmed and symmetrised
→ Lips: volumised and reshaded
→ Forehead: resized relative to face
Step 4: All changes applied in under 16 milliseconds
Step 5: User sees "enhanced" face as their default reality
──────────────────────────────────────────────────────────────
Result: The filter runs so seamlessly that many users genuinely forget it is running at all.
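To make Step 3 a little more concrete, here is a deliberately simplified Python sketch. Real filters track hundreds of landmarks and then warp the pixels between them; this toy version only moves four hypothetical eye-outline points outward from their shared centre, which is the basic geometry behind an "enlarged" eye. The coordinates, the function names and the 1.2 factor are illustrative assumptions, not Instagram's implementation. The constant also shows where the 16 millisecond figure in Step 4 comes from: it is roughly one frame at 60 frames per second.

```python
# Toy sketch of a landmark-based "eye enlargement", the kind of geometric
# correction listed in Step 3. Assumptions (not taken from any real filter):
# landmarks are plain (x, y) pixel coordinates, and "enlarging" a feature
# means pushing its landmarks outward from their shared centre by a factor.

Point = tuple[float, float]

# At 60 frames per second, each frame leaves 1000 / 60 ms (about 16.7 ms) for all work.
FRAME_BUDGET_MS = 1000 / 60


def centroid(points: list[Point]) -> Point:
    """Return the average position of a group of landmarks."""
    n = len(points)
    return (sum(x for x, _ in points) / n, sum(y for _, y in points) / n)


def scale_about_centroid(points: list[Point], factor: float) -> list[Point]:
    """Move each landmark away from (factor > 1) or towards (factor < 1) the centroid."""
    cx, cy = centroid(points)
    return [(cx + (x - cx) * factor, cy + (y - cy) * factor) for x, y in points]


if __name__ == "__main__":
    # Hypothetical outline of one eye: two corners plus top and bottom of the lid.
    eye = [(100.0, 50.0), (120.0, 44.0), (140.0, 50.0), (120.0, 56.0)]
    enlarged = scale_about_centroid(eye, 1.2)  # 20% linear enlargement
    for before, after in zip(eye, enlarged):
        print(f"{before} -> ({after[0]:.1f}, {after[1]:.1f})")
    print(f"Per-frame time budget at 60 fps: {FRAME_BUDGET_MS:.1f} ms")
```

Moving the landmarks is only half the job: a real filter would then warp the surrounding pixels to follow them, and would have to finish that work for every frame inside the time budget.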

The “Always On” Problem

Here is what makes the modern Instagram AI filter genuinely dangerous. Earlier filters were clearly activated: you pressed a button, you saw the effect. Current beauty filters are designed to be invisible. In many apps they are switched on by default or stay active from the moment the camera opens. Consequently, teenagers spend hours each day looking at a filtered version of their face, and comparatively little time looking at their real one.

Furthermore, the filters are calibrated to a specific beauty standard. Not a diverse standard. Not a culturally varied standard. These social media beauty standards are narrow, Western, and Eurocentric — built on symmetry, smoothness, and specific proportions. Every face that deviates from that standard gets “corrected” — automatically and silently.

What The Filter Is Actually Showing You

A typical Instagram beauty filter makes the following changes:

| Feature Modified | How It Changes | What It Signals |
|---|---|---|
| Skin texture | All pores and texture removed | Normal skin is “wrong” |
| Eye size | Enlarged 15–25% | Natural eyes are too small |
| Nose width | Narrowed 10–20% | Wider noses are undesirable |
| Jaw shape | Slimmed and narrowed | Natural jaw width is excessive |
| Lip volume | Increased 10–30% | Natural lips are insufficient |
| Skin tone | Uniformly brightened | Uneven natural skin is a flaw |

Every modification sends the same message: your natural face is not good enough. For a 13-year-old seeing this message 200 times a day, the psychological impact is cumulative and severe.
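A percentage enlargement also compounds. If the table's figures are linear scale factors applied to both width and height (an assumption; the table does not say which measure it uses), the visible area grows by the square of that factor. A 20% enlargement, for example, gives 1.2 × 1.2 = 1.44, so the eye covers 44% more of the face. A minimal Python sketch of that arithmetic, with an illustrative function name:

```python
# Assumption: the table's percentages are linear scale factors applied to both
# width and height. Area then grows by the square of that factor.


def area_increase_percent(linear_increase_percent: float) -> float:
    """Percentage growth in area when width and height both grow by the given percent."""
    factor = 1 + linear_increase_percent / 100
    return (factor**2 - 1) * 100


if __name__ == "__main__":
    # The eye-size range from the table: 15% to 25%.
    for pct in (15, 20, 25):
        print(f"{pct}% wider and taller -> {area_increase_percent(pct):.1f}% more area")
```

At the top of the table's eye-size range, 25%, the same arithmetic gives just over 56% more area (1.25 × 1.25 = 1.5625).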


Instagram AI Filter: Body Dysmorphia Rates Skyrocketing

Body dysmorphic disorder (BDD) is a serious mental health condition. A person with BDD becomes preoccupied with perceived flaws in their appearance — flaws that are often minor or invisible to others. The condition causes significant distress and can become completely debilitating.

The Numbers Are Alarming

The correlation between Instagram AI filter adoption and body dysmorphia rates is not subtle.

| Year | BDD Prevalence (Teens) | Instagram Monthly Users | Filter Technology |
|---|---|---|---|
| 2012 | ~2.4% of teens | 100 million | Basic overlays only |
| 2016 | ~3.1% of teens | 500 million | Early face filters |
| 2019 | ~4.8% of teens | 1 billion | AI beauty filters launch |
| 2021 | ~6.7% of teens | 1.4 billion | Beauty filters default-on |
| 2023 | ~8.2% of teens | 2 billion | AI face reshaping mainstream |
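Taking the table's prevalence estimates at face value, a few lines of Python show how much larger each later figure is than the 2012 baseline. The dictionary simply restates the article's own numbers; the function name is illustrative.

```python
# The article's own estimates of teen BDD prevalence (%), copied from the table above.
BDD_PREVALENCE_PCT = {2012: 2.4, 2016: 3.1, 2019: 4.8, 2021: 6.7, 2023: 8.2}


def growth_factor(series: dict[int, float], start: int, end: int) -> float:
    """How many times larger the value for `end` is than the value for `start`."""
    return series[end] / series[start]


if __name__ == "__main__":
    for year in (2016, 2019, 2021, 2023):
        print(f"2012 -> {year}: x{growth_factor(BDD_PREVALENCE_PCT, 2012, year):.2f}")
```

On these figures the 2023 estimate is about 3.4 times the 2012 one (8.2 / 2.4). On its own, a table like this shows the two trends moving together rather than proving that one causes the other.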

Furthermore, a 2021 survey by the American Society of Plastic Surgeons found that 72% of surgeons were seeing patients who brought filtered selfies as a reference for their desired procedure. These patients were not asking to look like a celebrity or an idealised stranger; they wanted surgery to match a distorted version of their own face.

Snapchat Dysmorphia — A Clinical Term Now

The phenomenon became so well documented that clinicians coined an informal term for it: Snapchat dysmorphia. It describes patients seeking surgery specifically to replicate filter effects: smoother skin, larger eyes, a narrower nose, a slimmer jaw. The term appeared in the journal JAMA Facial Plastic Surgery in 2018. By 2023, however, it was being applied well beyond Snapchat, to beauty filters on Instagram and TikTok as well.

Who Is Most Vulnerable?

Research from University College London identified three high-risk groups:

Young adolescents (ages 11–14): Brain development is at a critical stage. Identity formation is actively happening. This is the worst possible time for a technology to systematically distort self-image.

Teenagers with pre-existing anxiety: Beauty filter exposure significantly amplifies existing body image concerns. Furthermore, it provides a constant, accessible mechanism for comparison that had no equivalent in previous generations.

High social media users (4+ hours daily): Dose-response relationship confirmed — more filter exposure correlates directly with greater body dissatisfaction and higher BDD symptom scores.

Instagram AI Filter Before and After: The Shocking Truth

The most effective way to understand Instagram AI filter damage is to look at the before-and-after reality — not of edited photos, but of the documented psychological impact.

Before: A Generation Without Persistent Beauty Filters

Prior generations had beauty standards amplified through magazines, television, and advertising. However, those images were clearly external — they were of other people, not of the viewer themselves. Furthermore, exposure was episodic rather than continuous. You saw a magazine. You felt inadequate for a moment. Then you put it down.

What the research showed in pre-filter adolescence:

| Metric | Pre-Filter (pre-2016) Era |
|---|---|
| Avg daily mirror/selfie time | 8–12 minutes |
| Body image concern (moderate-severe) | 28% of teenage girls |
| BDD diagnosis rate (teens) | 2.4–3.5% |
| Cosmetic surgery requests under 18 | Rare — flagged as concerning |
| Ability to identify media manipulation | High — photos were “other people” |
| Main beauty comparison source | Magazines, TV, peers |

After: Living Inside the Instagram AI Filter Reality

The post-filter landscape is qualitatively different. The comparison source is no longer a magazine photograph of a professional model. It is the teenager’s own face — distorted by AI — reflected back at them continuously.

Documented outcomes in the filter era:

| Metric | Post-Filter (2020–2024) Era |
|---|---|
| Avg daily selfie/filter interaction time | 45–90 minutes |
| Body image concern (moderate-severe) | 46% of teenage girls |
| BDD diagnosis rate (teens) | 7–9% |
| Cosmetic surgery requests under 18 | Surging — normalised in many markets |
| Ability to identify media manipulation | Severely reduced — “it’s my face” |
| Main beauty comparison source | Filtered self-image |

The shift from external comparison to self-comparison is the critical change. Previous generations compared themselves to an idealised other. Gen Z compares themselves to an AI-distorted version of themselves — and concludes that their real face is broken.

The Instagram AI Filter Mental Health Crisis Meta Won’t Address

Meta knows. That is not speculation — it is documented. The 2021 internal Facebook research leak showed clearly that the company’s own studies identified Instagram as a significant contributor to body image issues and mental health deterioration, particularly among teenage girls. Their internal slide stated: “We make body image issues worse for one in three teen girls.”

What Meta Did With This Information

Meta did not remove beauty filters. They did not add mandatory disclosure labels. They did not restrict filter access to adults. They ran a public relations response, made incremental adjustments to some content recommendations, and launched a “digital wellbeing” page on their website. Meanwhile, the filters remained active, the algorithm continued promoting aspirational appearance content, and the platform continued onboarding millions of new teenage users.

The Business Model Problem

Understanding why Meta will not meaningfully address this beauty filter harm requires understanding the business model. Instagram monetises attention. Body image anxiety generates engagement — comparison, aspiration, self-monitoring, and return visits. Beauty brands, cosmetic procedure advertisers, and supplement companies are among Instagram’s most valuable advertising clients.

The Attention Economy Loop Behind Beauty Filters

──────────────────────────────────────────────────────────────
User sees filtered beauty standard
│
▼
User feels inadequate about real appearance
│
▼
User spends more time on platform (seeking validation)
│
▼
More time = more ad exposure
│
▼
Beauty/cosmetic advertisers pay premium for this audience
│
▼
Meta earns more revenue
│
▼
Filter technology improved, beauty standard reinforced
│
▼
Loop continues and intensifies
──────────────────────────────────────────────────────────────

The business incentive to address the harm is therefore structurally weak. That is why regulatory intervention, not corporate self-regulation, is the primary mechanism through which meaningful change is occurring.

What Mental Health Professionals Are Saying

Clinicians are not ambiguous. The British Association for Counselling and Psychotherapy formally called for mandatory filter labelling in 2022. The Royal College of Psychiatrists in the UK described social media beauty filter exposure as a “public health emergency” for adolescent mental health. Furthermore, the American Psychological Association recommended age verification and filter disclosure requirements in their 2023 health advisory on social media use in adolescents. ⚡

Instagram AI Filter: Why Men Are Suffering Too

The public conversation about Instagram AI filter harm almost exclusively focuses on girls and women. This framing is both understandable and dangerously incomplete. Men and boys are suffering significant beauty filter-related body image damage — and the silence around it is making the harm worse.

The Male Beauty Standard Instagram Promotes

Male beauty filters and aspirational content on Instagram push a specific standard:

- Facial symmetry and sharp jawline definition

- Lean muscle mass with visible abdominal definition

- Clear skin with no texture or blemishes

- Broad shoulders with narrow waist ratio

- Height signals (camera angles, background choices)

This standard is as artificially constructed as the female equivalent. Professional physique competitors, fitness influencers, and male models maintain appearance through combinations of extreme training, precise nutrition timing, lighting, filters, and in many cases, anabolic steroids. Furthermore, the “natural” male body presented as the norm in aspirational content is anything but.

Why Men Don’t Talk About It

Research from Flinders University found that men who spent significant time on image-focused platforms reported substantially lower body satisfaction. However, the same research found that men were dramatically less likely to seek help or discuss the issue — even when their distress was clinically significant.

The stigma around male body image vulnerability is profound. Admitting that an app makes you feel bad about your face is coded as weakness in most male social environments. Therefore, the damage accumulates silently. Consequently, male body dysmorphia, muscle dysmorphia (sometimes called “bigorexia”), and eating disorders — all linked to social media filter exposure — are significantly underdiagnosed.

The Specific Risk: Muscle Dysmorphia

Male filter harm manifests differently from female filter harm. While female body image distress centres primarily on thinness and facial features, male distress more commonly centres on muscularity. The constant exposure to AI-enhanced, perfectly lit, heavily edited male physiques creates an impossible standard. Research suggests muscle dysmorphia rates among young men have tripled since 2015. Specifically, the condition involves compulsive exercise, restrictive eating, and supplement abuse — including anabolic steroids — in pursuit of an AI-normalised body standard. 🛡️

Countries Banning Instagram AI Filter Beauty Standards

Regulatory response to Instagram AI filter harm is accelerating globally. Several jurisdictions have moved from conversation to legislation — with more following.

Norway — Marketing Control Act Amendment (2021)

Norway was one of the first countries to extend mandatory retouching labels from traditional advertising to social media. Under a 2021 amendment to its Marketing Control Act, in force since July 2022, influencers and advertisers must attach a standardised label to any paid post in which a person’s body shape, size or skin has been digitally altered, including with filters. Failure to comply can lead to financial penalties.

Impact: Norwegian influencers reported a significant cultural shift. Many began voluntarily removing filters after seeing audience responses to unfiltered content. Additionally, the legislation created a benchmark that other European nations began studying for adoption.

France — Mandatory Model Health Disclosures Extended

France’s 2017 law requiring “retouched photograph” labels on commercial images was extended in 2023 to explicitly include social media beauty filter content used for commercial purposes. Furthermore, France’s national education authority began integrating digital image literacy into the national secondary school curriculum.

United Kingdom — Age-Appropriate Design Code

The UK’s Age Appropriate Design Code (children’s code) requires platforms to apply the highest privacy and safety settings by default for users under 18. Specifically, this has been interpreted by regulators to include restrictions on algorithms that promote appearance-altering content to minors. The Online Safety Act 2023 extended these obligations significantly.

Global Regulatory Status

| Country | Legislation | Status | Scope |
|---|---|---|---|
| Norway | Marketing Control Act (2021 amendment) | Active | Retouched advertising images, incl. influencer posts |
| France | Commercial Retouch Law | Active (extended 2023) | Commercial social media |
| United Kingdom | Online Safety Act | Active 2024 | Minors, platform safety |
| Australia | Online Safety Code | In development | Platform accountability |
| European Union | Digital Services Act | Active 2024 | Platform algorithmic transparency |
| United States | KOSA (Kids Online Safety Act) | Proposed, not yet enacted | Platform duty of care for minors |
| India | IT Amendment Rules | In development | Social media accountability |

The direction is clear. Governments globally are moving toward mandatory disclosure, age-based restrictions, and platform accountability. The question is not whether regulation will arrive — it is how quickly and how comprehensively.


The Movement Fighting Back Against Instagram AI Filter Culture

Not everyone is waiting for regulation. A significant, growing movement of creators, researchers, activists, and survivors is actively challenging Instagram AI filter beauty culture — and building genuine alternatives.

#NoFilter — The Creator-Led Movement

Thousands of creators globally have committed to unfiltered content. They post real skin texture. Real asymmetry. Real bodies at real weights. The movement began as a quiet counter-cultural choice and grew into an explicit advocacy position. Specifically, creators began adding text overlays: “No filter. No editing. This is real.”

The audience response has been consistently positive. Engagement rates on unfiltered authentic content frequently outperform filtered equivalents. Furthermore, audience comments reveal the depth of relief that many followers feel seeing real, unretouched faces.

Dove’s Real Beauty Campaign — Evolved

Dove has been running its Real Beauty campaign since 2004 and has publicly committed to keeping filters and digital body manipulation out of its advertising globally. In 2021 it launched the “Reverse Selfie” campaign, which plays a filtered selfie backwards to reveal the extraordinary series of digital manipulations behind it.

Their research found that 80% of girls distort their appearance online before age 13. The campaign reached over 4 billion media impressions and sparked genuine mainstream conversation about filter harm. We recommend every parent watch the Reverse Selfie campaign video with their child.

The Body Positive Clinician Network

A growing network of psychologists, psychiatrists, and counsellors has developed specific clinical protocols for Instagram AI filter-related body dysmorphia. Furthermore, several clinicians now include a “digital mirror audit” (reviewing clients’ filter usage patterns) as a standard component of assessment for body image disorders.

Young People Leading the Charge

Crucially, many of the most powerful voices in this movement are Gen Z themselves. Young creators who grew up with filters are now documenting their own recovery from filter-related body dysmorphia. These accounts — raw, honest, and deeply personal — reach peer audiences in ways that adult campaigns cannot replicate.

Consequently, an internal cultural shift is developing within Gen Z — from filter dependence toward a growing appreciation for unfiltered authenticity. However, it is a slow shift against significant structural and algorithmic resistance.

Instagram AI Filter: Teaching Digital Literacy to Your Kids

Understanding the problem is necessary. Knowing how to respond as a parent or educator is essential. Here is what the evidence says actually works — and what does not.

What Does Not Work

Banning social media entirely rarely succeeds for teenagers. It increases secrecy, pushes usage underground, and removes the opportunity to develop critical digital skills in a supervised environment. Furthermore, teenagers denied access at home simply access it elsewhere.

Telling teenagers that influencers are fake without demonstration is ineffective. Abstract information about digital manipulation does not penetrate the emotional reality of seeing a beautiful face — even if that face is filtered. Show, do not tell.

What Actually Works

Strategy 1: The Filter Reveal. Sit with your child and demonstrate filter technology in real time. Show your own face with and without a beauty filter. Show the specific changes the filter makes. Make it concrete and visual rather than abstract and verbal. Specifically, children respond to demonstration far more powerfully than instruction.

Strategy 2: The Behind-the-Scenes Curriculum. Share content that shows the production behind filtered images — lighting rigs, multiple photographers, post-production editing, professional makeup. Several YouTube channels document this honestly. Make your child a producer, not just a consumer.

Strategy 3: The “What Would They Look Like?” Question. When viewing filtered content together, make a practice of asking: “What do you think they actually look like?” Not critically — curiously. This activates critical thinking rather than passive consumption.

Strategy 4: Build Identity Outside Appearance. The strongest protection against appearance-based social media harm is a robust sense of identity that is not tied to looks. Academic achievement, creative skills, athletic accomplishment, community contribution, strong friendships — these provide the psychological anchoring that makes appearance-based comparison less destabilising.

Strategy 5: Model It Yourself. Do you use beauty filters? Do you comment on your own appearance negatively? Do you scroll Instagram and sigh? Children learn from observation, not instruction. Your relationship with your own digital appearance is the curriculum they are studying most carefully.

The Digital Literacy Framework for Schools

Effective school-based digital literacy programmes addressing Instagram AI filter harm include four components:

School Digital Literacy Framework — Filter Awareness

──────────────────────────────────────────────────────────────
COMPONENT 1: Technical Literacy
"How does this technology actually work?"
→ AI facial mapping demonstration
→ Filter before/after exercise
→ Understanding beauty standard encoding

COMPONENT 2: Critical Consumption
"What am I actually looking at?"
→ Identifying filter usage in real content
→ Understanding production realities
→ Commercial intent behind aspirational content

COMPONENT 3: Emotional Awareness
"How does this content make me feel and why?"
→ Named emotional response practice
→ Connecting media exposure to mood
→ Building self-compassion vocabulary

COMPONENT 4: Active Agency
"What choices do I have?"
→ Curation and unfollowing skills
→ Creating unfiltered content intentionally
→ Discussing with peers openly
──────────────────────────────────────────────────────────────


Troubleshooting Guide: Recognising and Responding to Filter-Related Body Image Harm

Quick Reference: Symptoms, Causes and Solutions

| Symptom | Root Cause | Solution | Prevention |
|---|---|---|---|
| Child refuses to be photographed without a filter and becomes distressed | Filter-dependent self-image — real face feels unacceptable | Gently explore when distress started. Reduce filter use gradually, not suddenly. Consider speaking with a school counsellor | Model unfiltered photography yourself. Celebrate real physical characteristics regularly and specifically |
| Teenager requests cosmetic procedure based on a filtered photo | Snapchat dysmorphia — filtered appearance accepted as real target | Take this seriously. Consult a child psychologist before any cosmetic consultation. Do not arrange a surgical referral at this stage | Discuss the gap between filtered and real appearance explicitly and regularly from an early age |
| Child spending excessive time examining face in camera or mirror | Emerging body dysmorphic symptoms — hypervigilance about appearance | Limit time with front-facing camera. Introduce body-neutral activities. Seek assessment if behaviour persists over two weeks | Build identity anchors in non-appearance domains from early childhood |
| Teenager becoming withdrawn after social media use | Comparison-triggered mood crash after beauty content exposure | Discuss specifically which content triggers the mood change. Help curate their feed actively together | Teach mood-tracking around social media use so patterns become visible and actionable |
| Child using language suggesting their natural face is “ugly” or “broken” | Internalised filter beauty standard — natural face coded as defective | Address directly and specifically. Identify exactly which features they are criticising. Counter with specific positive truths | Use specific, non-generic positive language about appearance regularly — not “you’re beautiful” but “I love the way your eyes crinkle when you laugh” |
| Young person exercising compulsively and obsessing over muscle gain | Muscle dysmorphia beginning — male filter beauty standard internalised | Open non-judgmental conversation. Discuss what they are trying to achieve. Professional support if exercise is interfering with daily life | Discuss male body image explicitly with boys — most never hear a single conversation about it from adults |
| Child compares themselves negatively to influencers regularly | Constant upward social comparison without context | Contextualise together — show production realities. Practice “what don’t I see in this post?” questions | Build critical media consumption as a shared family practice from the first device |

How Devolity Business Solutions Supports Digital Wellness and Literacy

The Instagram AI filter crisis is not only a parenting challenge — it is an organisational one. Schools, healthcare providers, employee wellbeing teams, and family-facing businesses all need frameworks for addressing digital appearance distortion honestly and effectively. This is where Devolity Business Solutions brings genuine expertise.

Devolity’s certified digital wellness specialists have worked with educational institutions, corporate wellbeing teams, and health organisations to design and implement digital literacy programmes that go beyond generic screen time advice. Their team understands both the technical architecture of filter technology and the psychological research on its documented harms. Consequently, their programmes address the issue with the depth and honesty it deserves.

Their digital wellness services include workshop programmes for schools on media literacy and body image, corporate wellbeing sessions on social media and professional identity, content strategy consulting for brands choosing to operate without filter culture, and policy frameworks for organisations navigating responsible social media use.

What makes Devolity’s approach different:

- 🚀 Evidence-based: Every programme grounded in current peer-reviewed psychological research

- 🛡️ Age-appropriate: Different frameworks for children, adolescents, adults, and professional contexts

- 💡 Technology-honest: Explains how filter technology actually works — not vague warnings

- ⚡ Action-focused: Participants leave with specific skills and habits, not just awareness

Furthermore, Devolity helps brands and content creators build authentic digital presences that reject filter culture without sacrificing quality or engagement. Specifically, their content strategy team has guided organisations from filtered aspirational content to authentic brand voices that outperform their previous metrics. Consequently, their clients discover that authenticity is not only ethically better — it is commercially more effective.

Ready to bring honest digital literacy to your school, organisation, or family? Connect with Devolity Business Solutions for a free digital wellness consultation.

Conclusion

The Instagram AI filter crisis is one of the defining mental health challenges facing Gen Z. It is not a story about vanity. It is a story about an entire generation being systematically taught that their natural faces are failures — by technology designed by corporations whose business model depends on that insecurity.

Here are five key takeaways to act on today:

- ✔ Filters are not neutral — they encode a specific, narrow beauty standard and apply it to every face automatically

- ✔ Body dysmorphia rates have more than tripled since beauty filters became mainstream, and the link is now documented in the clinical literature

- ✔ Men and boys are affected too — silence around male body image harm makes it more dangerous, not less present

- ✔ Regulation is arriving — Norway, France, the UK and the EU are already acting; more will follow

- ✔ Digital literacy is the most effective protection — show, demonstrate, and discuss rather than warn and restrict

Furthermore, this problem will not solve itself. Meta will not prioritise your child’s mental health over its advertising revenue. The filters will get more convincing. The standard will get more impossible. Therefore, the conversation has to happen at home, in schools, and in communities — now, honestly, and repeatedly.

Your next step: Tonight, have one genuine conversation with a young person in your life. Not a lecture. A question. “Do you ever feel worse about yourself after scrolling Instagram?” Their answer will tell you everything you need to know about next steps.

Connect with Devolity Business Solutions to build a digital literacy strategy for your school, organisation, or family that addresses the Instagram AI filter problem with the depth and honesty it demands.

Frequently Asked Questions

What is the Instagram AI filter scandal?

The Instagram AI filter scandal refers to the growing body of evidence that beauty-enhancing AI filters on Instagram and similar platforms are systematically damaging Gen Z mental health — particularly body image and self-worth. Internal Meta research, leaked in 2021, confirmed the company knew Instagram worsened body image for one in three teenage girls. Despite this evidence, beauty filters remained active and algorithmically promoted, prioritising advertising revenue over user wellbeing.

How do Instagram beauty filters cause body dysmorphia?

Beauty filters use AI facial mapping to restructure users’ faces in real time: enlarging eyes, slimming noses, smoothing skin, and narrowing jaws. When users see this altered face continuously, the brain begins to accept it as the baseline, and the real, unfiltered face then appears wrong by comparison. Internalising an AI-constructed appearance as the target and rejecting the natural face is one proposed pathway to body dysmorphic disorder. When it drives people to seek surgery to match their filtered image, clinicians call it Snapchat dysmorphia.

Which countries are banning Instagram AI beauty filters?

Rather than banning filters outright, the countries acting so far mostly require disclosure or platform safeguards. Norway requires a standardised label on retouched advertising images, including paid social media posts. France extended its retouching disclosure laws to commercial social media content in 2023. The UK’s Online Safety Act 2023 mandates platform protections for minors, including restrictions on algorithmically recommended content. The European Union’s Digital Services Act requires algorithmic transparency. In the United States, the Kids Online Safety Act has been proposed but not yet enacted. Australia is developing its Online Safety Code with similar provisions.

Why are men and boys affected by Instagram beauty filters?

Men and boys are exposed to AI-enhanced male physique and facial content that presents an impossible standard as normal. The specific harm manifests as muscle dysmorphia — compulsive pursuit of an AI-normalised body — more commonly than as the facial-feature body dysmorphia seen in women. The harm is compounded by stigma: male body image vulnerability is culturally coded as weakness, so men rarely discuss or seek help for filter-related distress. Consequently, male filter harm is significantly underdiagnosed and underreported.

How do I teach my child about digital literacy and Instagram filters?

Start with demonstration rather than instruction. Show your child a beauty filter in action on your own face — activate it, then deactivate it, and discuss what changed. Make the technology visible and concrete. Additionally, practice the “what don’t I see?” question when viewing aspirational social media content together. Build critical consumption as a shared family habit. Furthermore, model unfiltered photography yourself — your own relationship with digital appearance is the most powerful curriculum your child has access to.

What is Snapchat dysmorphia?

Snapchat dysmorphia is an informal clinical term, not a formal diagnosis, popularised by a 2018 article in JAMA Facial Plastic Surgery. It describes patients seeking cosmetic surgery specifically to replicate the appearance of their filtered selfies, asking for their real face to be made to look like its AI-altered version. The term originated with Snapchat filters but now covers beauty filters on all social media platforms. It represents the most severe end of the Instagram AI filter body image harm spectrum and is being reported by plastic surgeons globally at increasing rates.

What is the movement fighting back against beauty filters?

The movement includes creator-led initiatives like the #NoFilter commitment, Dove’s explicit pledge to remove all digital body manipulation from their advertising globally, the clinical community’s formal calls for mandatory filter disclosure, and thousands of young people documenting their own recovery from filter-related body dysmorphia on social media. Furthermore, regulatory bodies in multiple countries are moving from voluntary guidelines to enforceable law. The movement is growing — and the evidence is increasingly on its side.

References and Authority Links

- American Psychological Association — Social Media and Adolescent Mental Health Advisory 2023

- JAMA Facial Plastic Surgery — Selfies, Dysmorphia and Social Media

- Royal College of Psychiatrists — Social Media and Young People’s Mental Health

- Dove Real Beauty — The Cost of Beauty Research

- Common Sense Media — Teens and Social Media Report 2023

- Wall Street Journal — Facebook Knows Instagram Is Toxic for Teen Girls

- NHS — Body Dysmorphic Disorder Overview

- Norwegian Ministry of Children — Digital Advertising Transparency Guidelines

- European Commission — Digital Services Act Overview

- NSPCC — Social Media and Young People’s Mental Health Research
